Learning to See Water

Narratives

In Gunther von Fritsch’s 1944 film, The Curse of the Cat People, a lonely girl invents an imaginary playmate for herself…or does she? The movie was praised by the head of the Child Psychology Clinic at UCLA. He would show the film in class and remark on the brilliant insight of having the child showcase her emotional problems by maintaining an awkward half-smile throughout the picture.

One year he invited Val Lewton, the film’s producer, to attend his lecture. When the professor brought up the smile, Lewton frankly shared that the actress held her mouth that way because she had just lost a tooth and they didn’t want the gap to show in the movie. The professor, in other words, was projecting.

When we project, we misattribute traits, qualities, or motivations to others that they don’t actually possess, based on our own assumptions and biases. These “others” could be colleagues, stakeholders, users, customers—anyone. We also project our implicit assumptions and biases, packaged as narratives, onto environments and situations. We do this every time we strategize and problem solve.

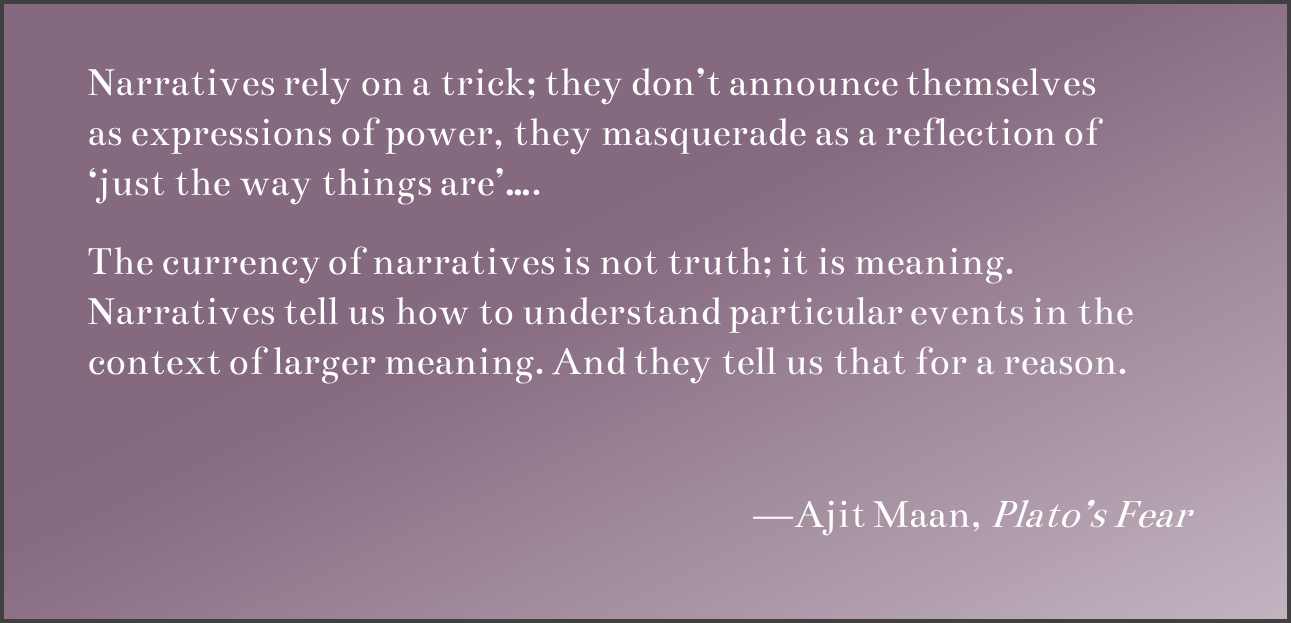

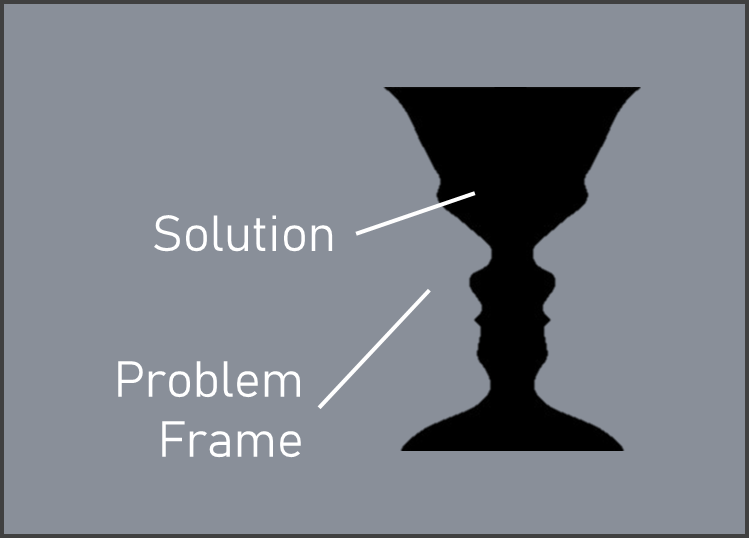

To quote McLuhan’s War and Peace in the Global Village, “One thing about which fish know exactly nothing is water….” McLuhan was writing about technology, but he just as easily could have been describing narratives, the stories we tell ourselves. Borrowing an analogy from Trudi Lang and Rafael Ramírez, narratives are like figure-ground illusions, such as Rubin’s vase (shown below).

Narrative provides the ground (the contextual background) against which we contrast the figure of interpretation. Our narratives contain the underlying assumptions we use to assign things meaning. Since these assumptions are often hidden, they produce frames that are themselves embedded and unrecognized, guiding our thinking in ways we are not aware of.

This invisibility often keeps us from leveraging narratives as tools. We also tend to think our current narrative is “just the way things are”, ignoring that those with different backgrounds, values, and identities will always assign different meanings to the same events. In this way, there can be no such thing as an “objective” narrative. All narratives are value laden. Narratizing is an inherently political act.

This is true personally and organizationally. Consider the news. It is less about telling you “the facts” and more about getting you to frame and interpret events in particular ways. (In short, it is trying to organize you.) The same is true of history. To not see its purpose as propaganda is to not see the water. At work, narratives are no different. The same quirks that get us in trouble in life are at play there as well.

Ghost Hunting

Executives typically engage in strategic planning from the standpoint of an implicit frame, a single assumed future in which the current strategy would be successful. This is sometimes called the “ghost scenario” precisely because it is unseen. A central point of scenario planning is to move away from this single assumed future and explore how alternative possible futures might alter our thinking. This also applies to product work.

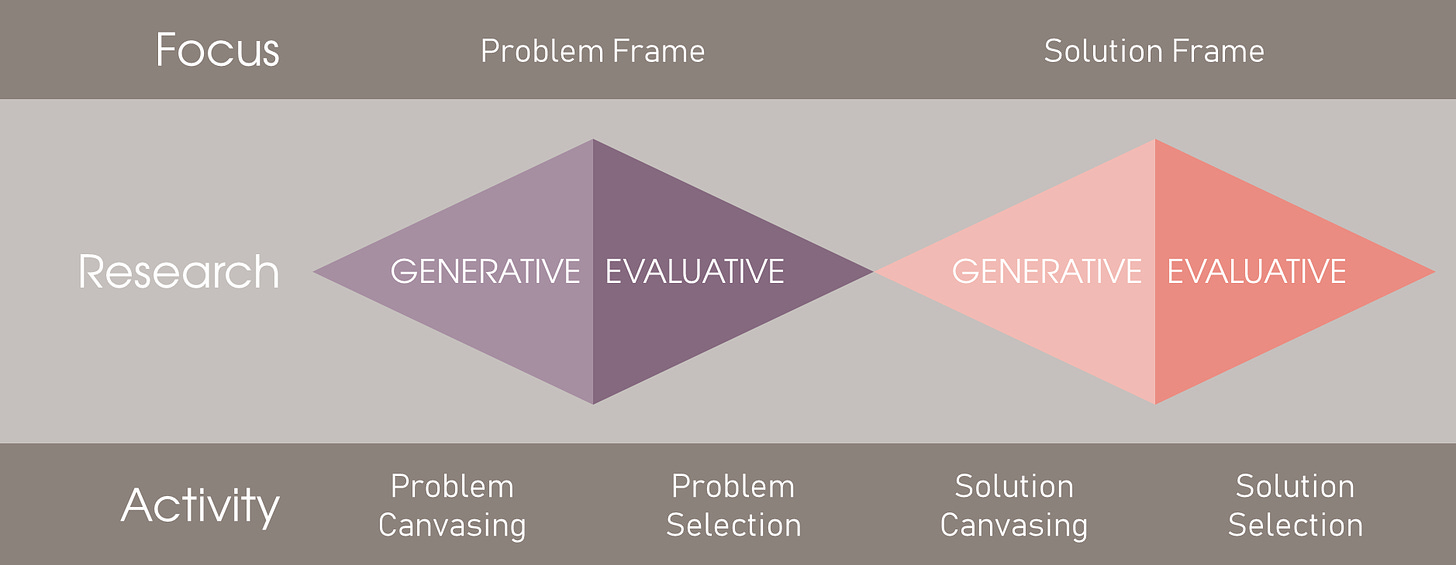

Consider the “double diamond”. The idea is that we should evaluate alternatives and optimize the problem frame before moving into solution space. As noted by design theorist Kees Dorst, this is not how designers work. More to the point, perhaps, it’s also not how our minds work. This is not a knock on the double diamond. In fact, it helps explain why it is useful. It is a prescriptive model, helping to steer us away from how our minds normally function to something more value-adding.

Left to our own devices, we instantly “see” solutions. Solutions presume a problem frame, but this is not where our mind goes. The problem frame is made of hidden assumptions piggybacking on our solution thinking, and that makes it a ghost frame. This is also like Rubin’s vase. Here, the solution is akin to the figure (the vase). It is what our conscious mind snaps to. The solution necessitates a problem frame as its ground, but this is largely outside our awareness.

This brings us back to projection. The projection of the implicit problem frame makes the solution as figure “appear”. Adapting a term from Kees Dorst, improving the solution then requires some “archeology”, the digging up and making explicit of the otherwise hidden assumptions in the ghost frame.

This is necessary to apply the double diamond in the first place; otherwise the left diamond remains hidden, which is the norm. In other words, the double diamond is not all that different from scenario planning. To expose the left diamond, the problem frame, you must first go “ghost hunting”. You need to dig up the ghost problem frame and make it visible, so that it can then be contrasted with real alternatives.

Relational Frames

Acceptance and Commitment Therapy, or “ACT” for short, was developed by Steven Hayes based on Relational Frame Theory. One of the main focuses of ACT is how human thinking works.

As Hayes explains in Get Out of Your Mind and Into Your Life, if you show humans a picture of a creature and tell them “This is a Gub-Gub” and “Gub-Gubs say Wooo,” they will also instantly associate Gub-Gub with the picture, Wooo with the picture, Gub-Gub with Wooo (without the picture), and Wooo with Gub-Gub (without the picture). Other animals that can be taught language, like chimpanzees, don’t do this.

In other words, we instantly see things in terms of bidirectional relationships, and these associations spread in a networked fashion. The problem is these relationships are often arbitrary. Hayes illustrates this by having people write down a concrete noun (any object or animal, for example). He then has them write down another concrete noun.

He then asks, “How is the first noun like the second?” When I did this exercise I wrote down “cup” and “car”. I said that a cup is like a car because it is also a vehicle transporting contents from Point A to Point B. But here’s the thing: if the two concrete nouns are random, and people can always think of how one is like the other, then we can always arbitrarily relate things.

As Hayes puts it, our relational frames are “arbitrarily applicable”. This can get us into trouble because of something called “cognitive fusion”. When we associate things, when we frame things in particular ways or use certain metaphors rather than others, we tend to “fuse” our thoughts with the thing. We act like the thing actually has the properties we are projecting onto it! Hayes’ point is that this results in no small amount of psychological suffering.

For our purposes, however, this leads to tunnel vision when strategizing or doing product work. When we “see” a solution, this “figure” is framed by the relational baggage we are projecting onto the situation, serving as its “ground”. If the ghost frame is that something is a “software problem”, then we will not see solutions fitting other frames. If the ghost frame had us seeing the issue as a process improvement problem, or an org design problem, or, to apply Weinberg’s Second Law of Consulting, as a people problem, then our solutioning would follow other paths.

ACT offers another helpful analogy here. The ghost frame, whatever it is, is like a Chinese finger trap. You “escape” a Chinese finger trap by first pushing into it deeper. Similarly here, to loosen the grip of the ghost frame, to create needed degrees of freedom, you first need to lean into and interrogate it. Elicit and capture the assumptions at play. Apply some dialectic debate. We will look at some exercises to do this in future posts.

New Ways of Thinking

It is tremendously value-adding to develop the psychological agility required to leverage narratives and frames as strategic tools. To do this, as the double diamond illustrates, we need to develop different habits, different ways of thinking. We cannot just “think harder”. If our thinking is beset with biases, then applying it more might amplify the biases at play. This is also, incidentally, why financial incentives do not improve decision making.

Asking people to be “objective” also does not work (nor is it possible). Presenting facts is also ineffective. In one of my favorite examples, Lord, Lepper, and Preston (1984) had people either in favor of or opposed to capital punishment assess two studies, one in favor of and one against capital punishment. Both groups on average rated the study supporting their views as being superior. Both groups also walked away feeling more confident in their positions, even though everyone on both sides read the same two (fake) studies.

In other words, if we are already processing information in biased ways, then new information is just more fuel for the fire. Further, if the new information disconfirms current beliefs, this will often trigger defense mechanisms. When conflicting evidence generates cognitive dissonance, people will often argue against it just to make themselves feel better. They may then even rehearse better defenses against future challenges to their views.

What has proved effective is “counterfactual reasoning”. Tversky and Kahneman (1973) noted that generating alternative scenarios is not our normal way of thinking—it is a skill that has to be developed like a muscle. Instead of debating “the facts”, we should take a more Socratic approach and guide others through generating their own counter-reasoning.

This brings us back to McLuhan’s water. Really detecting an environment, McLuhan argued, requires a comparison environment, an “anti-environment”. Because our narrative projections are instantaneous, we need to develop the habit of fleshing out our own “counter-narratives”.

We saw this in the last post. The more that people flesh out a narrative explaining something, the more they believe it to be true and the more it comes to serve as a ghost frame for new information.

Until next time.