Systems as Mental Interfaces

In today’s post we’ll be discussing Edward Martin Baker’s Scoring a Whole in One. Credit to Sean Murphy, of SKMurphy, Inc., who reached out and asked if I’d like to write something about it. I said yes, and we each wrote up our different takes. Below is mine. And here is the link to Sean’s excellent post. Let’s get started!

Edward Martin Baker was a protégé of W. Edwards Deming. Deming thought Baker’s ideas brought new thinking to the table and encouraged him to write. His first book, Scoring a Whole in One, is an argument against the normal business-school way of running things, instead stressing the importance of taking a holistic, systemic view (hence the “whole” in the title).

Most organizations operate from the assumption that system performance is determined by the independent performance of component parts (such as business units or divisions). This, Baker argues, is a fallacy. He illustrates with a story: An American auto manufacturer knew customers preferred a car made by a Japanese competitor. They didn’t understand why, so they thought they’d do some research.

They bought one of the competitor’s cars, chose one of their own, and took them both apart. They had specialists analyze all the parts and the findings were sent to a manager who assembled a report. The conclusion was that the American car parts were of superior quality…and so they were just as puzzled as they were before.

What were they missing? As Baker puts it, the method they used for their research was the same approach they took to design their cars. Neither focused on the larger system. Imagine, Baker asks, if you took the best parts from the best cars on Earth and then put them together into a single car. Would the result be the ultimate car? Far from it—it would not even run. Yet this is how most orgs think and operate.

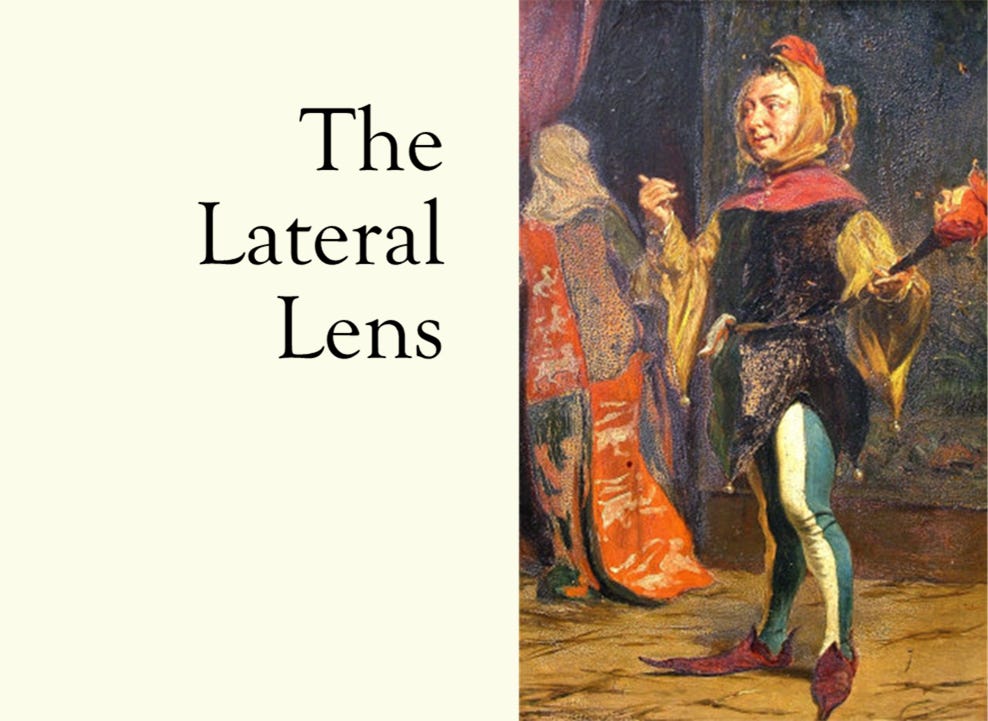

The performance of any complex system is not the sum of its parts. It must also include the effects of interactions among the parts, and those indirect effects are often larger than the initial changes that caused them. If a system has three parts, A, B, and C, and A interacts with both B and C, then the system’s performance is not A + B + C but A + B + C + AB + AC (image from Baker’s book). Organizations tend to ignore interactions and indirect effects and focus on silo optimization instead. This fallacy is typically baked into incentive programs.
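To make the A + B + C + AB + AC idea concrete, here is a minimal Python sketch. It is not from Baker’s book: the part scores, the interaction weights, and the choice to model each interaction as a weighted product are all assumptions made purely for illustration.

```python
# Hypothetical standalone scores for the three parts (illustrative numbers).
parts = {"A": 2.0, "B": 3.0, "C": 1.0}

# Hypothetical interaction weights: A interacts with B and with C.
# A negative weight means the two parts work against each other.
interactions = {("A", "B"): -0.5, ("A", "C"): 0.25}


def additive_performance(parts):
    """The 'sum of the parts' view: A + B + C."""
    return sum(parts.values())


def system_performance(parts, interactions):
    """Sum of the parts plus interaction terms: A + B + C + AB + AC.

    Assumption: each interaction is modeled as weight * part * part.
    """
    total = sum(parts.values())
    for (first, second), weight in interactions.items():
        total += weight * parts[first] * parts[second]
    return total


naive = additive_performance(parts)
actual = system_performance(parts, interactions)
print(f"sum of parts: {naive}, with interactions: {actual}")
```

With these made-up numbers, the parts-only view reports 6.0 while the view that includes interactions reports 3.5. The specific values do not matter; the point is that the two views can diverge sharply.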

In another of Baker’s examples, the management of the engine division of an auto manufacturer rejected a change that would reduce cost per vehicle by $80…because it would increase cost per engine by $30. Thus, a change that would save the company an average of $50 million a year was rejected because it made one division’s siloed metrics worse. But are they really to be faulted?

After all, they did what they were paid to do. Their bonuses probably weren’t tied to optimizing the system. As Goldratt famously states in The Haystack Syndrome, “Tell me how you measure me, and I will tell you how I will behave. If you measure me in an illogical way…do not complain about illogical behavior.” Or, as Baker puts it, “When the management system relies on bribes and punishments, it encourages individuals, or teams, to behave in competitive, self-optimizing, self-protective ways”, a point he powerfully makes with a series of interaction matrices (image adapted from Baker’s book).

In the example above, Purchasing, Engineering, and Assembly all make respective improvements, which holistically result in things getting worse. This will seem counterintuitive so long as one fails to think in terms of interactions.

Just as two medicines that could help you might together make you sicker, so too could departments improving themselves make overall system performance worse. In fact, to optimize the larger system, some departments or orgs or teams may need to become worse on their metrics, as illustrated in the image below (adapted from Baker’s book).
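Here is a small Python sketch of how an interaction matrix can show every department improving while the whole gets worse. The numbers are hypothetical and are not taken from Baker’s matrices; the layout (rows as the department making a change, columns as the department feeling the effect) is also an assumption for illustration.

```python
departments = ["Purchasing", "Engineering", "Assembly"]

# effects[i][j]: effect of department i's change on department j's results.
# Diagonal entries are each department's own (local) gain.
# All numbers are hypothetical.
effects = [
    [5, -4, -3],   # Purchasing's change helps itself, hurts the others
    [-2, 4, -3],   # Engineering's change
    [-1, -2, 3],   # Assembly's change
]

# What each department reports when it looks only at its own metric.
local_gains = [effects[i][i] for i in range(len(departments))]

# What the organization experiences once all cross-effects are counted.
net_system_change = sum(sum(row) for row in effects)

for name, gain in zip(departments, local_gains):
    print(f"{name}: local gain {gain:+d}")
print(f"Net effect on the system: {net_system_change:+d}")
```

In this made-up case each department can truthfully report a gain, yet the net effect on the system is negative once the off-diagonal entries are included.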

This insight should also change how performance reviews are done. (Recall the Goldratt quote above.) If you tell people that internal competition and infighting are a problem but still cling to the annual performance reviews and reward mechanisms that incentivize such behavior, then your “say-do ratio” is off. As Baker further argues, you’re fooling yourself by even believing you can accurately parse who caused what anyway.

This point brings us to the title of the book, a play on words referencing a golf analogy. To quote Baker, “The various components of the body cannot be individually credited for their contribution to a good golf swing.” An organization is no different, he argues. If a system is performing well, then it is illogical to try to identify the separate contributions of participants. The credit should be shared equally.

This is also one of the lessons of Deming’s famous “red bead experiment”. If you’re unfamiliar, then check out this post by Daniel Good. As Good puts it, if someone’s performance = their own contribution + the effect of the system on that contribution, then even if you theoretically could assign a value to someone’s (apparent) performance, the resulting equation is still not solvable! You’ll instead “narratize” the gaps here, ultimately basing pay on what you find to be believable stories.
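A tiny Python sketch shows why that equation cannot be solved: one observed number and two unknowns. The observed value and the range of candidate contributions are hypothetical, chosen only for illustration.

```python
# One observed number (hypothetical): someone's apparent performance.
observed_performance = 10

# Every (own contribution, system effect) pair below sums to the observed
# value, so the observation alone cannot tell them apart.
candidate_splits = [
    (own, observed_performance - own)
    for own in range(-5, 16, 5)
]

for own, system_effect in candidate_splits:
    print(f"own contribution {own:+d}, system effect {system_effect:+d}")
```

Each printed split is equally consistent with the single observed number. Choosing one of them is where the “narratizing” comes in.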

Attempting to base rewards on performance likely makes little sense anyway. (Note this does not imply that everyone should be paid the same. Rewards should primarily be based on doing the right things, not on outcomes. Pay should also be based on level of expertise, seniority, skills acquired, and level of responsibility.)

It is vital to map your systems. The “system”, as you think of it, is a mental model—it is itself a form of map; and, as the General Semantics folks stress, “The map is not the territory.” Baker agrees with this: Whatever we think our system is, this is always just “the way we think about the territory, about ‘what’s out there’, although ‘what’s out there’, and what’s in our mind cannot be easily separated, if at all.”

For you, the system as you conceive of it is essentially your “mental interface”: it determines which interactions or interventions will even occur to you to undertake. If you aren’t mapping and improving your model of the systems at play, then your interface for making changes is left unstated, half-baked, and likely inaccurate and inconsistent.

To improve your interventions, you must improve your mental interface.

Until next time.